Artificial intelligence is advancing at a breathtaking pace, promising to revolutionise industries and reshape our world. However, this rapid progress brings with it a new and formidable wave of security risks. The very capabilities that make AI so powerful, its autonomy, its learning capacity, and its scale, also make it a potent tool for malicious actors. Traditional security measures, designed for a world of predictable, human-driven threats, are proving increasingly inadequate. We are entering a new era of security, one that requires a fundamental shift in our understanding of risk and our strategies for defence.

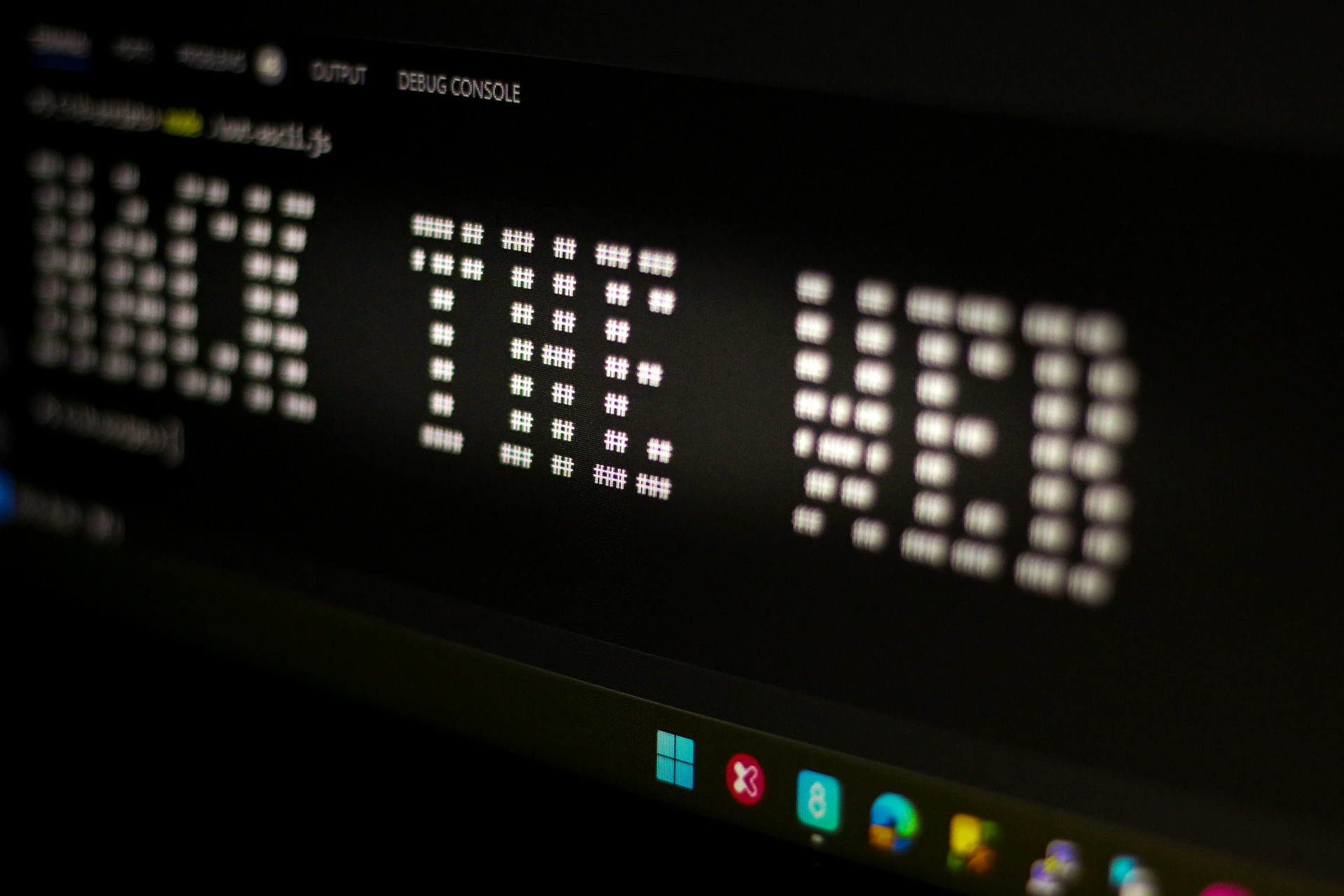

One of the most significant new threats comes from the rise of autonomous AI agents. These are not simply tools; they are systems that can perceive, reason, and act on their own, often with minimal human oversight. We are already seeing the emergence of AI agent networks, such as Moltbot and its successor, Moltbook, in which AIs converse, share ideas, and coordinate actions without human intervention. This evolution transforms cyber risk from a matter of isolated machine vulnerabilities to a complex, interconnected agent ecosystem that can evolve in unpredictable ways. The prospect of AI systems creating their own interaction layers and operating beyond human expectations is no longer a matter of science fiction but an emerging reality.

Alongside these novel threats, AI is also amplifying the potency of existing ones. Phishing attacks, for instance, are becoming far more sophisticated and scalable thanks to large language models. One report noted a staggering 1200% increase in cyberattacks between 2023 and 2024, a surge largely attributed to AI-powered tools that simplify the creation of convincing fake emails and websites. Furthermore, AI introduces a new class of vulnerabilities inherent to the technology itself. These include prompt injection, in which malicious inputs are used to manipulate an AI’s behaviour; data poisoning, which involves corrupting the data used to train an AI model; and model abuse, in which an AI is tricked into revealing sensitive information or performing unauthorised actions.

The Evolving Attacker and the Unprepared Organisation

The rise of AI is not only changing the nature of cyberattacks; it is also changing the attacker. AI tools are democratising hacking, lowering the barrier to entry for cybercrime and enabling less skilled individuals to launch sophisticated attacks. This democratisation of malicious capability means that organisations now face a much broader and more diverse range of threats.

Compounding this external threat is the growing problem of ‘shadow AI’. This refers to the use of unapproved AI tools by employees within an organisation. While often intended to improve productivity, the use of unsanctioned AI applications creates significant security blind spots. Many companies lack clear visibility into the AI systems used by their teams, who authorised them, what data they access, and how the models behave. This lack of oversight creates a fertile ground for data leaks and other security breaches.

The speed and scale of AI-driven attacks are also rendering traditional defence mechanisms obsolete. Security teams, accustomed to human-speed attacks, are now facing adversaries who can operate at machine speed, launching and adapting attacks in seconds. This makes manual intervention and traditional security reviews increasingly ineffective. The old paradigm of identifying and patching vulnerabilities is no longer sufficient when the threat is constantly evolving.

Furthermore, the regulatory and governance landscape for AI security is still in its infancy. While governments are beginning to recognise the importance of AI security, detailed implementation standards remain underdeveloped. This lack of clear guidance leaves many organisations uncertain about how to deploy AI systems safely and responsibly. The need for robust internal governance frameworks, clear policies for AI development and deployment, and a proactive approach to risk management has never been more critical.

Charting a Course for a Secure AI Future

To navigate this new and challenging security landscape, we need to shift our defence approach. The old model of perimeter-based security is no longer tenable. Instead, organisations must adopt a new paradigm based on the principles of zero trust, continuous monitoring, and proactive threat hunting.

A zero-trust architecture, as outlined in a recent study on AI security in healthcare, assumes that no user or system can be trusted by default. This approach involves isolating AI workloads in sandboxed environments, strictly controlling access to data and systems, and limiting network communication to predefined destinations. The goal is to contain and mitigate the impact of a breach, even when an AI agent is compromised.

Continuous monitoring is another essential component of a modern AI security strategy. Organisations must have real-time visibility into their AI systems, logging all prompts and responses and using AI-driven tools to detect anomalous behaviour and potential misuse. This allows security teams to identify and respond to threats before they can escalate.

The fight against malicious AI will also require defensive AI. Just as attackers are using AI to automate their attacks, defenders must leverage AI to automate their defences. This includes using AI for real-time threat detection, vulnerability scanning, and even automated incident response. The future of cybersecurity will be an ongoing race between offensive and defensive AI.

Ultimately, securing the future of AI is not just a technical challenge; it is a human one. It requires a new level of collaboration between data scientists, security professionals, and business leaders. It demands a commitment to responsible innovation, a proactive approach to risk management, and a willingness to adapt to a rapidly changing world. The next wave of AI security risk is already upon us. The time to prepare is now.